INTRODUCTION

Both military missions and civilian applications have motivated numerous investigations into using teams of collaborating unmanned aerial vehicles (UAVs) to accomplish complex missions with strongly coupled tasks [1–26]. Given a team goal, these vehicles coordinate their activities to accomplish an autonomous mission as efficiently and effectively as possible. For years, teams of UAVs have been proposed for military applications such as serving as wide area search munitions [4]; suppressing enemy air defense systems [8–10]; and conducting intelligence, surveillance, and reconnaissance (ISR) [15–18]. Researchers have also proposed UAV teams for civilian applications, such as tracking the shape of a contaminant cloud (e.g., to identify a release of radioactive material into the atmosphere) [22], monitoring biological threats to agriculture [23], supporting disaster management and civil security [24], and conducting traffic surveillance for sparse road networks [25].

Most current ground stations allow waypoints to be uploaded, and because the UAVs carry autonomous controllers, they can fly a predetermined path without pilot intervention. In most cases, the ground station can compute a smooth flight path between the waypoints based on the aircraft's specifications. Thus, no additional flight planning is needed; the ground station only has to be given the waypoints.

Reducing reliance on human operators is one goal of autonomy. An alternative or complementary goal is to allow the human operator to “work the mission” rather than “work the system” [27]. This means that autonomy must support, not take over, the decision-making. The Intelligent Tasker software was developed to work alongside a ground station and assist an operator in planning a complex mission that uses multiple vehicles. Its user interface and back-end Genetic Algorithm Optimizer make it straightforward for the user to plan and execute an optimized, coordinated mission. The user designs the mission, and the software determines an optimized way to task the assets and provides the ground station with the waypoints needed to direct the UAVs. The software supports the initial tasking of multiple assets and the real-time retasking of assets if “pop-up” points of interest arise or an asset is lost. This work has been applied to small fixed-wing UAVs but can also be applied to other types of aerial, terrestrial, or even marine vehicles, as well as to heterogeneous teams [18].

MISSION SCENARIO

The mission example considered here is ISR. For instance, the mission could provide intelligence for securing the surroundings of an Army base or another specific area. This type of mission is also relevant to many civilian applications in which places or points of interest must be monitored, such as border patrol or forest fire detection. A three-plane scenario was chosen because the small AeroVironment Raven RQ-11 UAV (pictured in Figure 1) is currently deployed in sets of three [28]. The Raven is used by the U.S. Army, Air Force, Marine Corps, and Special Operations Command; foreign customers include Australia, Estonia, Italy, Denmark, Spain, and the Czech Republic. To date, more than 19,000 airframes have been delivered to customers worldwide, making the Raven one of the most widely adopted UAV systems in the world. Even though Ravens are widely fielded in sets of three, there do not appear to be any examples in the open literature specifically discussing the teaming of Ravens to complete a mission. The ideas in this article extend the usefulness of a set of three assets by teaming them on a coordinated mission without requiring additional trained personnel.

Figure 1: Raven RQ-11 Field Set.

In this mission scenario, the three planes are launched within minutes of each other (as pictured in Figure 2) and are to return within minutes of each other. The scenario does not require the fastest mission completion time; rather, the planes must observe the area as long as needed to complete the mission and spend the maximum time over the area of interest without exceeding their battery life. In the scenario constructed for this exercise, it was assumed that a set of points of interest (POIs), {p1, p2, p3, …, pn}, is chosen from a map of the area of interest. Each POI is assigned a priority (low, medium, or high) based on the threat it may pose or the importance of the site. The priority level dictates how many times the site is visited during the mission. A loiter time is also chosen for each POI; it dictates how long the UAV should circle, observing the site, during each visit.
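The priority and loiter-time parameters can be captured in a small data model. The sketch below is a minimal Python illustration, not the Intelligent Tasker's actual code: the mapping of low, medium, and high priority to one, two, and three visits is a hypothetical choice, as are the class and function names and the made-up coordinates. It expands each POI into one task per required visit, the kind of task list an optimizer would then distribute among the UAVs.

```python
from dataclasses import dataclass
from enum import Enum


class Priority(Enum):
    # Hypothetical visit counts; the article says only that priority
    # dictates how many times a POI is visited.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class POI:
    name: str
    lat: float
    lon: float
    priority: Priority
    loiter_s: float  # seconds spent circling the site on each visit


def expand_visits(pois):
    """Return one (name, loiter_s) task per required visit, in input order."""
    return [(p.name, p.loiter_s) for p in pois for _ in range(p.priority.value)]


def total_loiter_s(pois):
    """Total time the team must spend circling over all POIs."""
    return sum(p.priority.value * p.loiter_s for p in pois)


# Made-up example POIs.
pois = [
    POI("p1", 38.44, -77.08, Priority.HIGH, 60.0),
    POI("p2", 38.42, -77.10, Priority.LOW, 120.0),
]
print(expand_visits(pois))
print(total_loiter_s(pois))
```

Here the high-priority site becomes three separate 60 s visits and the low-priority site a single 120 s visit, for 300 s of total observation time.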

Figure 2: Soldier Launching Raven UAV.

THE USER INTERFACE

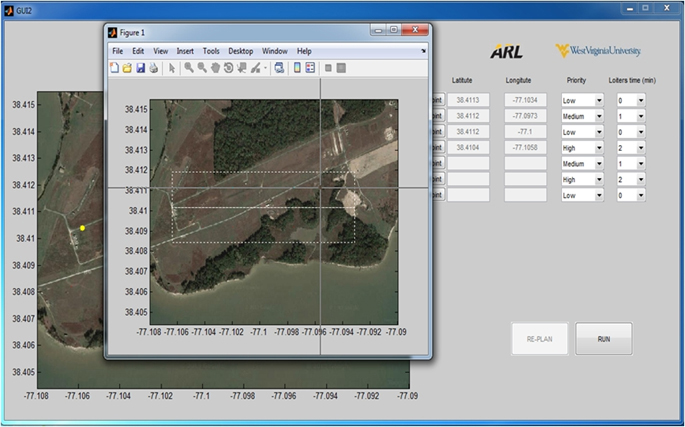

The graphical user interface (GUI) allows a user to plan and execute a complex coordinated mission with three UAVs and up to 10 POIs. To begin, the operator chooses a set of POIs and a launch point. The user selects an area of interest from the Bing Hybrid Map Provider and indicates how many points of interest in the area are to be visited. Clicking on the map populates the latitude and longitude of each point and lets the user specify its priority level and loiter time (as pictured in Figure 3). From the launch point, the software calculates the maximum distance a UAV can fly and still return within its safe battery life. Points outside this range are not allowed to remain in the list, because including them would cause the mission to fail. Points close enough together to be observed at the same time are clustered for efficiency.
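The range check and clustering steps can be sketched as follows. This is a hypothetical illustration: it assumes the safe range is a straight out-and-back distance derived from a cruise speed and safe endurance (both made-up values here), measures distance with the haversine formula, and uses a simple greedy clustering rule with an assumed observation radius. The article does not describe the software's actual range model or clustering method.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in meters."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def max_radius_m(cruise_speed_mps, safe_endurance_s):
    """Farthest distance from launch that still allows a straight return
    (hypothetical model: ignores wind, climb, and loiter time)."""
    return cruise_speed_mps * safe_endurance_s / 2


def reachable(launch, points, radius_m):
    """Drop points that could not be reached and returned from safely."""
    return [p for p in points if haversine_m(*launch, *p) <= radius_m]


def cluster_points(points, radius_m):
    """Greedy clustering: a point joins the first cluster whose seed (first
    member) lies within radius_m, i.e. close enough to observe together."""
    clusters = []
    for p in points:
        for c in clusters:
            if haversine_m(*c[0], *p) <= radius_m:
                c.append(p)
                break
        else:
            clusters.append([p])
    return clusters


launch = (38.4300, -77.0900)
pois = [(38.4400, -77.0800), (38.4402, -77.0801), (38.9000, -77.0900)]
ok = reachable(launch, pois, max_radius_m(12.0, 3600.0))
clusters = cluster_points(ok, radius_m=100.0)
print(len(ok), [len(c) for c in clusters])
```

With these assumed numbers, the third point (about 52 km away) is rejected, and the two remaining points, roughly 24 m apart, fall into one cluster.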

Figure 3: User Interface for Intelligent Tasker Software.

SYSTEM DESIGN

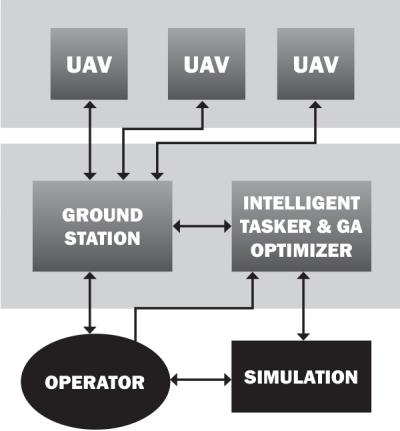

The Intelligent Tasker is designed to work with various ground stations that have autopilot capabilities (e.g., Ardupilot, APM Mission Planner, Corvid) (see Figure 4). The idea is to allow the ground station software to manage multiple autonomous vehicles completing a coordinated operation. As illustrated in Figure 4, the operator interacts with the Intelligent Tasker to define the mission and runs a simulation to ensure the routes are acceptable. Once the operator accepts the suggested coordinated solution, the plan is made available to the ground station. If retasking becomes necessary, the ground station relays information back to the Intelligent Tasker: each UAV's current position, the points of interest that have been visited, and each vehicle's remaining battery life.
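The information relayed back for retasking could be represented as a small message. The sketch below assumes a JSON encoding and hypothetical field names (`uav_id`, `visited`, `battery_remaining_s`); the article does not specify the message format exchanged between the ground station and the Intelligent Tasker.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class StatusReport:
    """Hypothetical retasking status message for one UAV."""
    uav_id: str
    lat: float                                   # current position
    lon: float
    visited: list = field(default_factory=list)  # POIs already observed
    battery_remaining_s: float = 0.0             # safe flight time left

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, text: str) -> "StatusReport":
        return cls(**json.loads(text))


report = StatusReport("uav1", 38.44, -77.08, ["p1", "p3"], 1500.0)
wire = report.to_json()
print(wire)
assert StatusReport.from_json(wire) == report
```

A plain, self-describing encoding like this keeps the Intelligent Tasker independent of any one ground station's internal telemetry format.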

Figure 4: Intelligent Tasker as Part of the UAV System.

Ground stations may have multiple channels for communicating with multiple vehicles. In this case, the ground station is given an ordered list of coordinates to visit for each UAV, and one pilot can handle all UAVs during the mission, as demonstrated in Darrah et al. [17]. If the ground station has only one channel for communicating with a UAV, then multiple instances of the ground station may need to run, one for each vehicle, and the Intelligent Tasker provides each instance with the ordered list of points for its vehicle.
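As one plausible output path, the ordered list for each UAV could be written in the plain-text QGC WPL 110 mission format that ArduPilot-compatible ground stations such as APM Mission Planner can import. The sketch assumes that format, MAVLink command IDs 16 (waypoint), 19 (loiter for a set time), and 17 (loiter indefinitely), a hypothetical 50 m loiter radius, and hypothetical per-UAV altitudes 15 m apart for vertical separation. Function names are illustrative; the article does not state which file format or altitudes were used.

```python
MAV_CMD_NAV_WAYPOINT = 16
MAV_CMD_NAV_LOITER_UNLIM = 17
MAV_CMD_NAV_LOITER_TIME = 19
MAV_FRAME_GLOBAL = 0
MAV_FRAME_GLOBAL_RELATIVE_ALT = 3


def to_qgc_wpl(home, tasks, alt_m, loiter_radius_m=50.0):
    """Render one UAV's ordered task list as QGC WPL 110 text.

    home: (lat, lon) of the launch point.
    tasks: ordered (lat, lon, loiter_s) tuples for this UAV.
    alt_m: this UAV's assigned altitude (relative to home), in meters.
    Row fields: index, current, frame, command, param1-4, lat, lon, alt, autocontinue.
    """
    # Placeholder home row (index 0).
    rows = [(0, 1, MAV_FRAME_GLOBAL, MAV_CMD_NAV_WAYPOINT, 0, 0, 0, 0, home[0], home[1], 0, 1)]
    for i, (lat, lon, loiter_s) in enumerate(tasks, start=1):
        # param1 = loiter time in seconds, param3 = loiter radius in meters
        rows.append((i, 0, MAV_FRAME_GLOBAL_RELATIVE_ALT, MAV_CMD_NAV_LOITER_TIME,
                     loiter_s, 0, loiter_radius_m, 0, lat, lon, alt_m, 1))
    # Finish by circling the home loiter position until retasked or landed.
    rows.append((len(tasks) + 1, 0, MAV_FRAME_GLOBAL_RELATIVE_ALT, MAV_CMD_NAV_LOITER_UNLIM,
                 0, 0, loiter_radius_m, 0, home[0], home[1], alt_m, 1))
    lines = ["QGC WPL 110"] + ["\t".join(str(v) for v in row) for row in rows]
    return "\n".join(lines) + "\n"


def missions_for_team(home, plans, base_alt_m=100.0, sep_m=15.0):
    """One mission text per UAV, each on its own altitude layer."""
    return {uav: to_qgc_wpl(home, tasks, base_alt_m + k * sep_m)
            for k, (uav, tasks) in enumerate(sorted(plans.items()))}


home = (38.43, -77.09)
plans = {"uav1": [(38.44, -77.08, 60.0)], "uav2": [(38.42, -77.10, 120.0)]}
files = missions_for_team(home, plans)
print(files["uav2"])
```

With a multichannel ground station, all of the returned mission texts would go to one instance; with single-channel ground stations, each text would go to the instance controlling that vehicle.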

The UAVs in this system communicate only with the ground station. The UAVs are launched and put in a holding pattern over the launch site until the coordinated tasking received from the Intelligent Tasker is uploaded from the ground station to each UAV. To ensure that the vehicles do not collide, the planes are flown with vertical separation. When retasking takes place, either to add a point of interest or to continue the mission after an asset has been lost, the UAVs are commanded to enter a holding pattern again at their current locations, send their positions to the ground station, report their remaining battery life, and indicate which waypoints they have left to visit. The new plan takes into account all taskings that still need to be completed, as well as the new positions of the vehicles.
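The bookkeeping behind a retask event can be sketched as gathering all unfinished visits into one pool before re-optimizing. In this hypothetical Python sketch, a lost vehicle's unfinished visits are assumed to come from its last known plan and are returned to the pool, pop-up POIs are appended, and only surviving vehicles contribute start positions and remaining battery. The class and field names are assumptions, not the software's interface.

```python
from dataclasses import dataclass, field


@dataclass
class VehicleState:
    """Hypothetical per-UAV state gathered while the team holds in place."""
    uav_id: str
    position: tuple                                # (lat, lon) of the holding pattern
    remaining: list = field(default_factory=list)  # unfinished visits, in order
    battery_s: float = 0.0                         # reported safe flight time left
    alive: bool = True                             # False once the asset is lost


def build_retask_problem(states, popups=()):
    """Pool every unfinished visit and collect the surviving start states.

    For a lost vehicle, `remaining` is assumed to come from its last known
    plan, since it can no longer report; its visits are redistributed.
    """
    pending = [visit for s in states for visit in s.remaining]
    pending.extend(popups)
    starts = {s.uav_id: (s.position, s.battery_s) for s in states if s.alive}
    return pending, starts


states = [
    VehicleState("uav1", (38.44, -77.08), ["p2", "p4"], 1500.0),
    VehicleState("uav2", (38.42, -77.10), ["p5"], 0.0, alive=False),
    VehicleState("uav3", (38.43, -77.07), [], 1800.0),
]
pending, starts = build_retask_problem(states, popups=["p9"])
print(pending, sorted(starts))
```

The pooled visits and surviving start states are exactly the inputs an optimizer needs to produce a fresh plan from the vehicles' new positions.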

GENETIC ALGORITHM OPTIMIZER

A genetic algorithm (GA) is a search algorithm based on the mechanics of natural selection and natural genetics [29]. In our software, the GA Optimizer searches for the optimal task assignment of UAVs during the mission. It employs the usual components of a GA: a fitness function developed for the particular scenario; chromosomes that represent candidate solutions to the problem; crossover, which creates new solutions from existing ones; mutation, which helps keep the GA from getting stuck in a local optimum; and elitism, which ensures that the best solution found never degrades from one generation to the next. Together, these components quickly produce an optimized solution in the form of a task list for each UAV. Other methods have been employed for the tasking problem [5–9]; however, in our work the GA has proven to be the most versatile and scalable approach. A fitness function can be developed for each mission scenario, and the solution space for each type of problem can be encoded as a set of chromosomes. For complete details on how the GA works, as well as various examples, see Darrah et al. [16, 17] and Eun and Bang [18].
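These components can be illustrated with a compact GA for a toy version of the problem. This is a minimal sketch, not the GA Optimizer described in [16, 17]: it uses a hypothetical chromosome of (task, UAV) genes whose order gives the visit sequence, a stand-in fitness that minimizes the longest closed route from home (so the planes return close together) instead of the mission-specific fitness used by the software, a simplified order-based crossover, swap and reassignment mutations, tournament selection, and elitism. Coordinates are made-up planar values in kilometers.

```python
import math
import random

HOME = (0.0, 0.0)  # launch point in a made-up planar frame (km)
TASKS = [(2.0, 1.0), (3.0, -1.0), (-2.0, 2.0), (-1.0, -3.0),
         (4.0, 4.0), (0.5, 3.5), (-3.0, -1.0), (1.0, -2.5)]


def route_length(points):
    """Closed tour: HOME -> points in order -> HOME."""
    path = [HOME, *points, HOME]
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


def decode(chrom, tasks, n_uavs):
    """Chromosome: list of (task_index, uav) genes; gene order = visit order."""
    routes = [[] for _ in range(n_uavs)]
    for t, u in chrom:
        routes[u].append(tasks[t])
    return routes


def fitness(chrom, tasks, n_uavs):
    """Stand-in objective (lower is better): length of the longest route."""
    return max(route_length(r) for r in decode(chrom, tasks, n_uavs))


def crossover(p1, p2, rng):
    """Order-based crossover: copy a slice of p1, fill the rest in p2's order."""
    n = len(p1)
    i, j = sorted(rng.sample(range(n), 2))
    child = [None] * n
    child[i:j] = p1[i:j]
    taken = {t for t, _ in p1[i:j]}
    fill = iter(g for g in p2 if g[0] not in taken)
    return [g if g is not None else next(fill) for g in child]


def mutate(chrom, n_uavs, rng, rate=0.2):
    """Swap the positions of two genes and/or reassign one task to a random UAV."""
    chrom = list(chrom)
    if rng.random() < rate:
        a, b = rng.sample(range(len(chrom)), 2)
        chrom[a], chrom[b] = chrom[b], chrom[a]
    if rng.random() < rate:
        k = rng.randrange(len(chrom))
        chrom[k] = (chrom[k][0], rng.randrange(n_uavs))
    return chrom


def run_ga(tasks, n_uavs=3, pop_size=60, generations=200, elite=2, seed=0):
    rng = random.Random(seed)

    def score(c):
        return fitness(c, tasks, n_uavs)

    def tournament(pop):
        return min(rng.sample(pop, 3), key=score)

    pop = [[(t, rng.randrange(n_uavs)) for t in rng.sample(range(len(tasks)), len(tasks))]
           for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=score)
        nxt = pop[:elite]  # elitism: the best solutions survive unchanged
        while len(nxt) < pop_size:
            child = crossover(tournament(pop), tournament(pop), rng)
            nxt.append(mutate(child, n_uavs, rng))
        pop = nxt
    best = min(pop, key=score)
    return decode(best, tasks, n_uavs), score(best)


routes, best = run_ga(TASKS)
print(round(best, 2), routes)
```

Because the elite chromosomes are copied unchanged into every generation, the best score in the population can never get worse, which is the guarantee elitism is meant to provide. Swapping in a different fitness function changes the mission objective without touching the rest of the loop.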

FLIGHT TESTING

Testing of this technology was performed at the U.S. Army Research Laboratory (ARL) Blossom Point Research Facility, near La Plata, MD. This 1,600-acre site offers a UAV test area 2 miles long by ½ mile wide. The facility is classified as a range and, as such, is closed to the public. The site also has a runway and a command-and-control area, which facilitate takeoff and landing as well as UAV observation during experiments.

During the flight demonstration phase, the three planes were launched one at a time under manual control, flown to the desired altitude, and then switched to autonomous mode, in which they began to circle at their home loiter position. The team could not acquire a set of Raven RQ-11 UAVs (which cost approximately $300,000) for testing, so planes similar to the Raven in size, shape, and payload capability were used. Three PROJET RQ-11 model airframes (pictured in Figure 5) were outfitted with the radios, sensors, and control computers needed to fly autonomously. For command-and-control functions, a FreeWave MM2 900-MHz radio was installed in each aircraft as well as in the ground control station (GCS). Video was captured on each plane by a HackHD camera mounted inside the fuselage with the lens flush with the airframe, and was transmitted to the ground in real time using a Stinger Pro 5.8-GHz transmitter. On the ground, the video was received by a YellowJacket Pro 5.8-GHz receiver integrated with the GCS. Each aircraft also carried an integrated MediaTek GPS module for position sensing.

Figure 5: Model Planes Used as Surrogate for Raven RQ-11.

The GCS used for flight testing consisted of a Futaba 9C remote controller and a Linux-based laptop. The pilot used the Futaba to command the UAVs directly during takeoff, landing, and any contingency operations. It also acted as the main communication node between the planes and the ground, except for video. The Linux laptop was used for telemetry monitoring, situational awareness, and mission status observation, and it communicated with the laptop running the GA, which calculated new mission plans. Once the Intelligent Tasker determined a mission plan, the plan was transmitted via Ethernet from the GA laptop to the Linux laptop for review, and then via WiFi to the Futaba 9C communications package for final transmission to the in-flight UAV team.

As the three planes were being launched, one of the Army personnel assembled to observe the demonstration was chosen to enter a set of POIs and associated priorities into the Intelligent Tasker, and an optimized coordinated mission was devised and communicated to the ground station. Once all three planes were in autonomous mode circling at the home loiter position, they were given their mission assignments from the ground station. At this point, the UAVs all flew off in autonomous mode in different directions to complete their part of the mission. After completion of their task list (visiting specific POIs in a specified order), they returned to the home loiter position to await further tasking or to be taken over and manually landed. The flights were observed on monitors that were used to track the movements of the UAVs and also view the video feeds that were being sent back from the UAVs’ onboard cameras. This monitoring verified that the UAVs found the designated POIs.
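The flight sequence above can be summarized as a small state machine per vehicle. The phase names and transition table below are a hypothetical reading of the demonstration narrative and of the retasking procedure (holding in place while a new plan is computed), written in Python; they are not taken from the ground station software. A pilot takeover for landing is allowed from any autonomous phase to reflect the contingency role of the manual controller.

```python
from enum import Enum, auto


class Phase(Enum):
    MANUAL_LAUNCH = auto()   # pilot flies the plane up to altitude
    HOME_LOITER = auto()     # autonomous circling at the home loiter position
    MISSION = auto()         # flying the uploaded, ordered POI list
    HOLD = auto()            # holding in place while a retask is computed
    MANUAL_LANDING = auto()  # pilot takes over to land


# Hypothetical transition table inferred from the flight-test narrative.
ALLOWED = {
    Phase.MANUAL_LAUNCH: {Phase.HOME_LOITER},
    Phase.HOME_LOITER: {Phase.MISSION, Phase.MANUAL_LANDING},
    Phase.MISSION: {Phase.HOME_LOITER, Phase.HOLD, Phase.MANUAL_LANDING},
    Phase.HOLD: {Phase.MISSION, Phase.MANUAL_LANDING},
    Phase.MANUAL_LANDING: set(),
}


class UavPhaseTracker:
    """Tracks one UAV's phase and rejects transitions not in ALLOWED."""

    def __init__(self, uav_id):
        self.uav_id = uav_id
        self.phase = Phase.MANUAL_LAUNCH
        self.history = [self.phase]

    def advance(self, new_phase):
        if new_phase not in ALLOWED[self.phase]:
            raise ValueError(f"{self.uav_id}: {self.phase.name} -> {new_phase.name} not allowed")
        self.phase = new_phase
        self.history.append(new_phase)


t = UavPhaseTracker("uav1")
for ph in (Phase.HOME_LOITER, Phase.MISSION, Phase.HOLD, Phase.MISSION,
           Phase.HOME_LOITER, Phase.MANUAL_LANDING):
    t.advance(ph)
print([p.name for p in t.history])
```

Encoding the allowed transitions explicitly makes it easy for monitoring software to flag an unexpected command, such as sending a mission to a plane still under manual launch control.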

CONCLUSIONS

Many complex military and civilian applications necessitate the use of teams of unmanned assets to accomplish diverse tasks. The goal of using a team of assets should be to allow the human operator to “work the mission” without having to be concerned with the details of how to choose an optimal way to accomplish all the tasks. This means that intelligent algorithms and autonomy must support, not take over, the decision-making. The Intelligent Tasker user interface makes it easy for a single operator or a small group of operators to plan and execute a sophisticated mission with little effort, and the GA Optimizer finds an optimized way to assign tasks to assets. The Intelligent Tasker is an add-on to existing systems, not a replacement: it uses existing autonomous controllers and ground stations to allow a complex mission to be carried out by one or a few operators in a supervisory capacity. This technology can provide a new way to maximize the use of UAVs in the field and is flexible enough to be applied to many diverse mission scenarios and types of assets (aerial, terrestrial, or even marine vehicles, as well as heterogeneous teams). It can also reduce the number of trained personnel required, thus saving time, money, and possibly lives.

Acknowledgments:

This work has been supported by the U.S. Army Research Laboratory under Cooperative Agreement no. W911NF-10-2-0110.

References:

1. Richards, A., J. Bellingham, M. Tillerson, and J. P. How. “Coordination and Control of Multiple UAVs.” Proceedings of the AIAA Guidance, Navigation and Control Conference, Washington, DC, 2002.

2. Chandler, P. R., M. Pachter, S. J. Rasmussen, and C. Schumacher. “Multiple Task Assignment for a UAV Team.” Proceedings of the AIAA Guidance, Navigation and Control Conference, AIAA 2002-4587, Monterey, CA, 2002.

3. Flint, M., M. Polycarpou, and E. Fernandez-Gaucherand. “Cooperative Path Planning for Autonomous Vehicles Using Dynamic Programming.” Proceedings of the 15th Triennial IFAC World Congress, Barcelona, Spain, pp. 481–487, 2002.

4. Schumacher, C., P. Chandler, and S. Rasmussen. “Task Allocation for Wide Area Search Munitions with Variable Path Lengths.” Proceedings of the American Control Conference, Denver, CO, June 2003.

5. Schumacher, C. J., P. R. Chandler, M. Pachter, and L. Pachter. “Constrained Optimization for UAV Task Assignments.” Proceedings of the AIAA Guidance, Navigation and Control Conference, Washington, DC, 2004.

6. Shima, T. S., S. J. Rasmussen, and A. G. Sparks. “UAV Cooperative Control Multiple Task Assignments Using Genetic Algorithms.” Proceedings of the American Control Conference, Portland, OR, 2005.

7. Shima, T. S., and C. J. Schumacher. “Assignment of Cooperating UAVs to Simultaneous Tasks Using Genetic Algorithms.” Proceedings of the AIAA Guidance, Navigation, and Control Conference and Exhibit, San Francisco, CA, 2005.

8. Darrah, M. A., W. Niland, and B. Stolarik. “Multiple UAV Dynamic Task Allocation Using Mixed Integer Linear Programming in a SEAD Mission.” Proceedings of the AIAA Infotech@Aerospace Conference, Alexandria, VA, 2005.

9. Darrah, M., W. Niland, and B. Stolarik. “Multiple UAV Task Allocation for an Electronic Warfare Mission Comparing Genetic Algorithms and Simulated Annealing.” DTIC Online Information for the Defense Community, ADA462016, 2006.

10. Darrah, M., W. Niland, B. Stolarik, and L. Walp. “UAV Cooperative Task Assignments for a SEAD Mission Using Genetic Algorithms.” Proceedings of the Guidance, Navigation and Control Conference, Keystone, CO, August 2006.

11. Darrah, M., and W. Niland. “Increasing UAV Task Assignment Performance Through Parallelized Genetic Algorithms.” Proceedings of the Infotech@Aerospace Conference, Rohnert Park, CA, 2007.

12. Shima, T., S. J. Rasmussen, A. G. Sparks, and K. M. Passino. “Multiple Task Assignments for Cooperating Uninhabited Aerial Vehicles Using Genetic Algorithms.” Computers & Operations Research, vol. 33, pp. 3252–3269, 2006.

13. Beard, R. W., T. W. McLain, D. B. Nelson, D. Kingston, and D. Johanson. “Decentralized Cooperative Aerial Surveillance Using Fixed-Wing Miniature UAVs.” Proceedings of the IEEE, vol. 94, no. 7, 2006.

14. Matlock, A., R. Holsapple, C. Schumacher, J. Hansen, and A. Girard. “Cooperative Defensive Surveillance Using Unmanned Aerial Vehicles.” Proceedings of the American Control Conference, St. Louis, MO, 2009.

15. Darrah, M., E. Fuller, T. Munasinghe, K. Duling, M. Gautam, and M. Wathen. “Using Genetic Algorithms for Tasking Teams of Raven UAVs.” Journal of Intelligent & Robotic Systems, vol. 70, no. 1, pp. 361–371, 2013.

16. Darrah, M., J. Wilhelm, T. Munasinghe, K. Duling, E. Sorton, S. Yokum, M. Wathen, and J. Rojas. “A Flexible Genetic Algorithm System for Multi UAV Surveillance: Algorithm and Flight Testing.” Unmanned Systems, vol. 3, no. 1, pp. 1–14, 2015.

17. Darrah, M., L. Pullum, S. Beck Roth, B. Gilkerson, and E. Taipale. “Using Genetic Algorithms for Robust Tasking of Multiple UAVs with Diverse Sensors.” Proceedings of the AIAA Infotech@Aerospace Conference, Seattle, WA, April 2009.

18. Eun, Y., and H. Bang. “Cooperative Task Assignment/Path Planning of Multiple Unmanned Aerial Vehicles Using Genetic Algorithms.” Journal of Aircraft, vol. 46, no. 1, pp. 338–343, 2009.

19. Karaman, S., T. Shima, and E. Frazzoli. “Task Assignment for Complex UAV Operations Using Genetic Algorithms.” Proceedings of the AIAA Guidance, Navigation, and Control Conference, Chicago, IL, 2009.

20. Zuo, Y., Z. Peng, and X. Liu. “Task Allocation of Multiple UAVs and Targets Using Improved Genetic Algorithm.” Proceedings of the 2nd International Conference on Intelligent Control and Information Processing, pp. 1030–1034, 2011.

21. Edison, E., and T. Shima. “Integrated Task Assignment and Path Optimization for Cooperating Uninhabited Aerial Vehicles Using Genetic Algorithms.” Computers & Operations Research, vol. 38, no. 1, pp. 340–356, 2011.

22. Sinha, A., A. Tsourdos, and B. White. “Multi UAV Coordination for Tracking the Dispersion of a Contaminant Cloud in an Urban Region.” European Journal of Control, vol. 15, nos. 3–4, pp. 441–448, 2009.

23. Techy, L., C. A. Woolsey, and D. G. Schmale III. “Path Planning for Efficient UAV Coordination in Aerobiological Sampling Missions.” Proceedings of the 47th IEEE Conference on Decision and Control, Cancun, Mexico, December 2008.

24. Maza, I., F. Caballero, J. Capitan, J. R. Martinez-de-Dios, and A. Ollero. “Experimental Results in Multi-UAV Coordination for Disaster Management and Civilian Security Applications.” Journal of Intelligent & Robotic Systems, vol. 61, no. 1, pp. 563–585, 2011.

25. Liu, X. F., Y. Q. Song, Z. W. Guang, and L. M. Gao. “A UAV Allocation Method for Traffic Surveillance in Sparse Road Network.” Journal of Highway and Transportation Research and Development, vol. 7, no. 2, pp. 81–87, 2013.

26. Girard, A. R., A. S. Howell, and J. K. Hedrick. “Border Patrol and Surveillance Missions Using Multiple Unmanned Air Vehicles.” Proceedings of the 43rd IEEE Conference on Decision and Control, Paradise Island, Bahamas, 2004.

27. U.S. Department of Defense. “Unmanned Systems Integrated Roadmap 2011–2036.” Reference Number 11-S-3613, http://www.acq.osd.mil/sts/docs/Unmanned%20Systems%20Integrated%20Roadma…, accessed August 2016.

28. Airforce-technology.com. “RQ-11B Raven Unmanned Air Vehicle (UAV), United States of America.” http://www.airforce-technology.com/projects/rq11braven/, accessed August 2016.

29. Goldberg, D. E. Genetic Algorithms in Search, Optimization, and Machine Learning. Reading, MA: Addison-Wesley, 1989.