INTRODUCTION

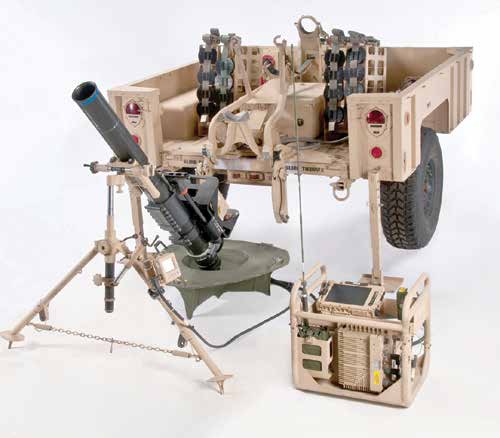

In response to rapid advancements in the fields of autonomy, artificial intelligence, and machine learning, the Department of Defense (DoD) has prioritized the development and integration of autonomous systems (such as the Multi-Utility Tactical Transport [MUTT] shown in Figure 1). According to the Defense Science Board’s 2016 Summer Study on Autonomy [1], “The DoD must accelerate its exploitation of autonomy—both to realize the potential military value and to remain ahead of adversaries who will also exploit its operational benefits.” Successful human-machine teaming will dramatically improve the speed with which the military collects, analyzes, and responds to data. This capability is critical to maintaining the tactical edge against increasingly tech-enabled adversaries. However, to fully realize autonomy’s battlefield potential, the military must first cultivate trust in autonomous systems down to the lowest tactical level.

Figure 1: MUTT (Source: Cpl. Levi Shultz, U.S. Marine Corps).

THE WARY WARFIGHTER

The vast majority of military applications for autonomy are likely to be non-lethal. Fields such as intelligence, operational planning, logistics, and transportation anticipate highly capable non-lethal systems within the next decade. The private sector has already pioneered many of these applications, most notably with self-driving cars (such as Google’s Waymo [see https://waymo.com/]) and autonomous warehouse robotics (such as Amazon’s Kiva [see www.amazonrobotics.com]). As those technologies become more widely available commercially, military members are likely to place more trust in autonomous systems with comparable applications inside the DoD. When it comes to frontline combat, however, there is no commercial equivalent; the battlefield is fluid, dynamic, and dangerous. As a result, Warfighter demands become exceedingly complex, especially since the potential costs of failure are unacceptable. The prospect of lethal autonomy adds even greater complexity to the problem. And without comparable applications for autonomy in the commercial sector, Warfighters will have no prior experience with similar systems. Developers will be forced to build trust almost from scratch.

For these reasons, the majority of infantry personnel, who rarely interact with computers in an operational setting, continue to believe that autonomy on the battlefield is more science fiction than fact. The current state of ground technologies in development has made Warfighters even more circumspect. Loud and cumbersome robots supported by teams of engineers have led Warfighters to distrust and dislike many developing technologies (such as the Big Dog robot shown in Figure 2, which the Marines felt was too noisy for use in combat) and to question whether there will ever be a tactical role for autonomy. When one talks with enlisted personnel about their aversion to autonomy on the battlefield, four main reasons for this resistance emerge:

- Warfighters often have little understanding of autonomy and its enabling technologies.

- Warfighters are concerned about their ability to communicate and collaborate with autonomous systems.

- Warfighters are concerned about increases to their logistical burden.

- Warfighters are concerned about operational safety.

Figure 2: Big Dog (DARPA Photo).

Accordingly, building trust in autonomous systems, whether or not they are weaponized, is likely to be especially challenging at the tactical level. To ensure that future autonomous systems meet user needs, and that these systems are actually employed, the DoD must recognize and address low-level Warfighter concerns. In particular, developers must understand small-unit trust relationships, tailor systems to fit into that social environment, educate Warfighters on the technology, and maintain collaborative relationships with end users going forward.

DEFINING AUTONOMY

It is important to define autonomy, especially as distinguished from automation. The Defense Science Board defines automation as “[a system] governed by prescriptive rules that permit no deviations” [1]. Autonomy, on the other hand, is defined as a system with “the capability to independently compose and select among various courses of action to accomplish goals based on its knowledge and understanding of the world, itself, and the situation” [1].

The key distinction here is the ability of a machine to think or reason on its own. The vast majority of Warfighters are accustomed to working with automation; that is, they are used to machines executing narrowly defined tasks. For example, the Mortar Fire Control System (MFCS) (such as the one shown in Figure 3) is an automated system that receives targeting data from its user and adjusts a mortar tube for enhanced accuracy [2]. The targeting data are generated by human forward observers; the MFCS simply calculates the charge and trajectory required to hit that target. Automated systems such as the MFCS still require a level of Warfighter trust. But because users are responsible for all inputs, the system’s scope is relatively narrow, and the outputs are well defined, users generally trust the system once it has been proven to work.

Figure 3: MFCS (Picatinny Arsenal Photo).

By contrast, a Perdix unmanned aerial vehicle (UAV) (shown in Figure 4) is an autonomous system that interacts in a swarm with dozens of other independent UAVs. Rather than following strict rules, such as a pre-programmed flight pattern, Perdix UAVs share sensor data and come to collaborative navigation decisions [3].

Figure 4: Perdix UAV (DoD Photo).

The ability of the swarm to both collect information and make decisions means that a single human can manage hundreds of UAVs with relative ease. Of course, it also raises concerns among potential users that the swarm may “decide” to do something counter to the human controller’s intent. Users may not always understand the mechanisms behind the decision-making process, which makes them wary of trusting the machine’s judgment. And without a “human element” built into the decision cycle, there is also a fear that machines will make inappropriate decisions with potentially lethal consequences.

These hurdles are not insurmountable, but they do suggest that Warfighters may require much more communication and assurance from autonomous systems than they require from automated systems. Recognizing what information to share and how best to share it is important. Cohesive small units often develop intuitive communication networks; individuals learn to anticipate one another’s needs and push information as appropriate. Fitting an autonomous system into this mix will thus first require developers to understand the social dynamics within a team.

TRUST IN SMALL UNITS

Small-unit cohesion has long been considered the backbone of military success. Warfighters going back centuries have been made to live, eat, and work together in small teams to build close personal ties and improve resiliency and communication on the battlefield. Cohesion is directly correlated with lethality. As French military theorist Charles Ardant du Picq observed, “Four brave men who do not know each other will not dare to attack a lion. Four less brave, but knowing each other well, sure of their reliability and consequently of mutual aid, will attack resolutely [4].”

The development of unit cohesion is dependent on more than just friendship. In fact, small military teams are united first and foremost by task cohesion, which is “a shared commitment among members to achieving a goal that requires the collective efforts of the group.” It is during the struggle to accomplish those tasks that Warfighters then develop social cohesion, or the enjoyment of each other’s company. Mutually shared hardships, such as combat or field exercises, give birth to “altruism and generosity that transcend ordinary individual selfish interests.” These displays of selflessness validate and enhance existing relationships, making the development and maintenance of unit cohesion a constantly iterative process [5].

Unit cohesion is a reflection of interpersonal trust. Trust between military team members depends on one another’s proficiency, predictability, and genuine concern. Proficiency gives Warfighters confidence that their leaders, peers, and subordinates can and will contribute meaningfully toward unit goals. Predictability allows team members to anticipate one another’s actions and synchronize efforts. Genuine concern gives Warfighters the assurance that the team cares for their well-being and will come to their assistance when required.

Only when these three characteristics converge do Warfighters feel comfortable trusting each other in an operational setting. As trust researcher F. J. Manning has noted, “soldiers can and do distinguish between likability and military dependability, choosing different colleagues with whom to perform a risky mission and to go on leave [5].”

When arriving at a new unit, Warfighters are afforded a certain level of confidence simply because they share a common identity with the rest of the team. Identity-based trust makes it easier for small units to integrate new members quickly by allowing them to assume a common set of skills and experiences [6]. Training then builds task cohesion and gives Warfighters the opportunity to win personal trust by demonstrating their individual proficiency, predictability, and genuine concern. When Warfighters interact consistently and prove themselves trustworthy both personally and professionally, social cohesion then tightens the knot. While not strongly correlated with combat performance, social cohesion is associated with job satisfaction, morale, well-being, and psychological coping.

Strong task and social cohesion give Warfighters much more patience for mistakes, but trust can still be broken. The key to maintaining trust after failure is forgiveness, which often depends on a coherent narrative explaining the failure. For example, if a Marine fails to get into position to provide suppressing fire during an attack, the team may question whether the Marine is proficient or reliable enough to be trusted in the future. However, if the Marine can explain that he or she was fixed in position by enemy fire, or even tripped and was injured, team members can empathize with the experience and forgive the mistake. Only if the Marine consistently makes similar mistakes, or fails to show remorse, does a team’s patience begin to wane.

In summary, military teams are united by shared tasks, solidified by personal interaction, and kept together by the willingness to forgive. The process for building trust in autonomous systems will not look the same, but there are elements of overlap. Once developers are aware of a team’s human interactions, they can better discern how autonomy will fit into that picture.

BUILDING TRUST

Autonomous systems cannot and should not be expected to win trust in the same way as humans. Warfighters, at least in the short term, will not place identity-based trust in an autonomous system because they cannot relate to it through common hardships, frustrations, and experiences. Additionally, autonomous systems cannot empathize, so they are unable to demonstrate genuine concern in any believable or meaningful way.

Finally, autonomous systems will not be integrated into a unit’s social network because they are unable to provide the companionship that correlates with improved quality of life.

Autonomous systems cannot contribute to social cohesion, but they can build task cohesion, which is statistically more important to combat effectiveness. Human-machine teaming requires users and autonomous systems to align goals and synchronize actions. As with humans, autonomous systems will build trust by performing well in training. For autonomy, that means demonstrating proficiency and predictability at least as well as, and ideally much better than, a high-performing human.

Proficiency for an autonomous system is the ability to execute assigned tasks in a way that enhances small-unit combat power. This means that, in addition to succeeding on specific tasks (such as identifying targets), autonomous systems also need to be able to move and function on the battlefield without imposing cognitive or physical burdens on the team. It is not enough for autonomous systems to simply recognize meta-objectives (such as “seize a building”); they must also infer micro-objectives (such as “provide suppressing fire,” “take cover,” and “remain quiet”).

A system must also be predictable enough for humans to anticipate its behavior. Human members of highly functional teams intuitively provide each other with information and resources based on their ability to understand the situation and predict one another’s responses. This shared understanding allows them to synchronize without extensive communication. Autonomous systems must likewise provide the same level of consistency. These systems will also need to anticipate human actions and communicate their intentions without overwhelming end users.

If autonomous systems can demonstrate the ability to reliably execute specified and implied tasks without becoming burdensome, they can win an initial amount of human trust. However, that initial trust can be destroyed in an instant if the system fails. Maintaining trust in autonomy is different from winning it in the first place. It requires systems to provide enough value to be worth “forgiving” and to communicate the reason for a failure in a way the end user can understand. It also requires end users to be familiar enough with the technology to recognize how certain components work, why they might fail, and how the unit might help avoid failure in the future.

WARFIGHTER ENGAGEMENT

Maintaining trust requires developers to build autonomous systems that are adaptable to specific user requirements. Because most Warfighters at the tactical level have no experience working with autonomy, it will initially be difficult for them to give useful and accurate feedback on aspects such as user interfaces and communication methods. Engineers and Warfighters will thus need to maintain collaborative relationships to identify and act on opportunities for improvement as the technology evolves.

As previously mentioned, Warfighters need systems that can execute assigned tasks and share information in appropriate ways without becoming logistical burdens or safety hazards. There are no strict definitions of those requirements. Rather, the requirements are dependent on how Warfighters prefer to share information and how they calculate the tradeoffs between an autonomous system’s logistical requirements, safety hazards, and tactical value.

Engaging with the end user is vital to designing a system that strikes the optimal balance between value and cost. When discussing autonomy with potential end users, it is helpful to focus on four main areas: (1) user understanding of the technology, (2) communication with the system, (3) logistical requirements, and (4) operational safety.

User Understanding of the Technology

Most Warfighters at the tactical level are unfamiliar with the inner workings of military technology. For these users, systems are often delivered with little technical explanation and are thus treated as black boxes. While this situation may be acceptable for automated systems, it will inhibit a Warfighter’s ability to trust an autonomous system’s reasoning and decision-making. It is thus important when engaging with end users to ask them how much they understand.

If an autonomous system relies on computer vision and machine learning, Warfighters need to understand both of those concepts, at least at a basic level. If a system relies on global positioning system (GPS) technology, Marines need to know the expected margin of error.

Explaining the technology can go far in developing trust because it helps Warfighters to calibrate their expectations to the actual abilities of the system. Additionally, once they understand the capability set, Warfighters will begin recommending applications, giving developers valuable insight into how Warfighters think about the technology and where it might be most useful in their current operations.

Communication With the System

Complex operational environments impose a tremendous cognitive workload on infantry personnel. Individuals may be scanning for enemy indicators, adjusting their position in a formation, navigating unfamiliar terrain, and identifying key terrain for cover and concealment, all at the same time. Warfighters in high-trust teams develop an intuitive understanding of how much information their peers require and how best to deliver it. Likewise, autonomous systems need to know when and how to push information to other individuals or to the squad as a whole without overwhelming them.

Not surprisingly, there is no single communication solution. Autonomous systems should allow teams to shape the way they communicate across a range of possible scenarios. Squad leaders may be receptive to lots of information on one mission and overwhelmed by the same amount on the next. Warfighters may require more information from a new system than from one that has been in use for several weeks. And some squads may prefer audible communication while others prefer text communication.

The key is to communicate information as simply as possible. Messages should be short and explicit, with the opportunity for users to engage further if more information is needed. Additionally, Warfighters should be able to customize aspects such as font types, font sizes, voices, volumes, and accents, which are standard settings on their cell phones.
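To make the idea of squad-adjustable communication more concrete, the following Python sketch shows one way a system might filter and route its messages. It is purely illustrative and is not drawn from any fielded system; the names `Priority`, `Message`, `CommsProfile`, `deliver`, and `expand`, and the three-level priority scheme, are hypothetical. Each message carries a short, explicit summary; the squad sets a priority threshold and a delivery channel; and fuller detail is released only when a user asks for it.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    """Hypothetical urgency levels for messages pushed by an autonomous system."""
    ROUTINE = 1
    IMPORTANT = 2
    URGENT = 3


@dataclass
class Message:
    priority: Priority
    summary: str      # short, explicit headline delivered first
    detail: str = ""  # extra context, released only if a user asks for it


@dataclass
class CommsProfile:
    """Squad-adjustable delivery settings (field names are illustrative)."""
    min_priority: Priority = Priority.IMPORTANT  # raise to cut traffic on busy missions
    channel: str = "text"                        # "text" or "audio", per squad preference


def deliver(messages, profile):
    """Keep only summaries that clear the squad's priority threshold,
    most urgent first, tagged with the channel they should go out on."""
    selected = [m for m in messages if m.priority >= profile.min_priority]
    selected.sort(key=lambda m: m.priority, reverse=True)
    return [(profile.channel, m.summary) for m in selected]


def expand(message):
    """Return the fuller explanation when a user chooses to engage further."""
    return message.detail or message.summary


if __name__ == "__main__":
    msgs = [
        Message(Priority.ROUTINE, "Battery at 80 percent"),
        Message(Priority.URGENT, "Movement, north treeline",
                "Thermal contact, two personnel, moving east"),
    ]
    print(deliver(msgs, CommsProfile()))
    print(deliver(msgs, CommsProfile(min_priority=Priority.ROUTINE, channel="audio")))
    print(expand(msgs[1]))
```

In this sketch, raising `min_priority` on a demanding mission limits traffic to urgent items, while lowering it for a newly fielded system lets the squad see more of what the machine is doing. Display and voice settings such as font size or volume could be added to the profile in the same way.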

In communicating information about its state, an autonomous system also needs to be able to self-diagnose, recommend solutions, and, when possible, self-correct. Human actors on the battlefield do this frequently; Marines are trained to respond to injuries by alerting teammates, recommending a course of action, and applying self-aid. Autonomous systems that are capable of the same actions will remove much of the maintenance and tracking burden from human team members.

Logistical Requirements

Small units are stretched thin by the amount of equipment they are required to manage. Warfighters are resistant to any additional weight or responsibility that does not contribute significantly to combat power. Thus, batteries, fuel, radios, remote controllers, and other components are important considerations when it comes to introducing autonomous systems to ground combat units. Depending on a system’s perceived value, and the mission set for which it is meant, Warfighters may opt to leave the system at home rather than incur the added weight. Additionally, systems that are too heavy or difficult to move by hand may go unused (or underused) if Warfighters are concerned that they will fail in the field.

Developers need to be cognizant of how a system’s support requirements impact small-unit logistics. If Warfighters are hesitant about the logistical burden, it is a signal that developers either need to prove the system is invaluable or attempt to slim down the support requirements. Systems that require extensive logistical support can serve to reduce trust in autonomy if Warfighters believe that the burden will negatively impact combat power. Warfighters should be consulted on the weight and size of systems, their components, and their fuel requirements. If the logistical requirements are too great for the end user, the technology should be tabled until better solutions are available.

Operational Safety

Warfighters will accept a certain amount of risk if the payoff creates an asymmetric advantage on the battlefield. Once they understand the technology, how to communicate with it, and what its logistics train entails, they will make an informal assessment of whether the reward is worth the risk. Developers need to understand these assessments. If Warfighters do not believe a system is worth employing, they will be even less likely to forgive that system when it fails.

The threshold for safety increases dramatically if an autonomous system is weaponized. Many Warfighters have a strong aversion to incorporating autonomous weapons into their operations, especially on the ground. To win trust and build confidence in autonomy, developers should focus first on autonomous surveillance, reporting, and load-bearing systems with lower risk factors, and then integrate weapons after Warfighters have become familiar with the technology. Additionally, developers should be wary of designs that could roll over, crush, or otherwise injure Warfighters in an operational setting. Any safety incidents early in the integration process could become a serious hindrance to further integration.

CONCLUSION

Robotics and autonomous systems will inevitably have a place on the future battlefield. Winning trust in those systems now is a matter of educating Warfighters on the technology and working closely with them to ensure that their initial experiences are intuitive, safe, and fruitful. Ironically, autonomy integration is, in large part, a human problem; and it will thus require human users to be at the center of the development process. By paying specific attention to user needs, developers can ensure that systems provide indisputable value and that users trust the systems and the development teams behind them.

References:

[1] David, R. A., and P. Nielsen. “Defense Science Board Summer Study on Autonomy.” doi:10.21236/ad1017790, June 2016.

[2] Calloway, A. “Picatinny Provides Soldiers With Quicker, Safer Mortar Fire Control System.” https://www.army.mil/article/70122/Picatinny_provides_Soldiers_with_quicker__safer_mortar_fire_control_system, accessed 25 July 2017.

[3] The Strategic Capabilities Office, Office of the Secretary of Defense. “Perdix Fact Sheet.” https://www.defense.gov/Portals/1/Documents/pubs/Perdix%20Fact%20Sheet.pdf, accessed 24 July 2017.

[4] Charlton, J. (ed.). The Military Quotation Book, Revised for the 21st Century. New York: St. Martin’s Press, 2013.

[5] MacCoun, R. J., and W. M. Hix. “Unit Cohesion and Military Performance.” In Sexual Orientation and U.S. Military Personnel Policy: An Update of RAND’s 1993 Study. RAND, 2010.

[6] Adams, B. D., and R. D. Webb. “Trust in Small Military Teams.” Defence and Civil Institute of Environmental Medicine (Canada), http://www.dodccrp.org/events/7th_ICCRTS/Tracks/pdf/006.PDF, accessed 2003.